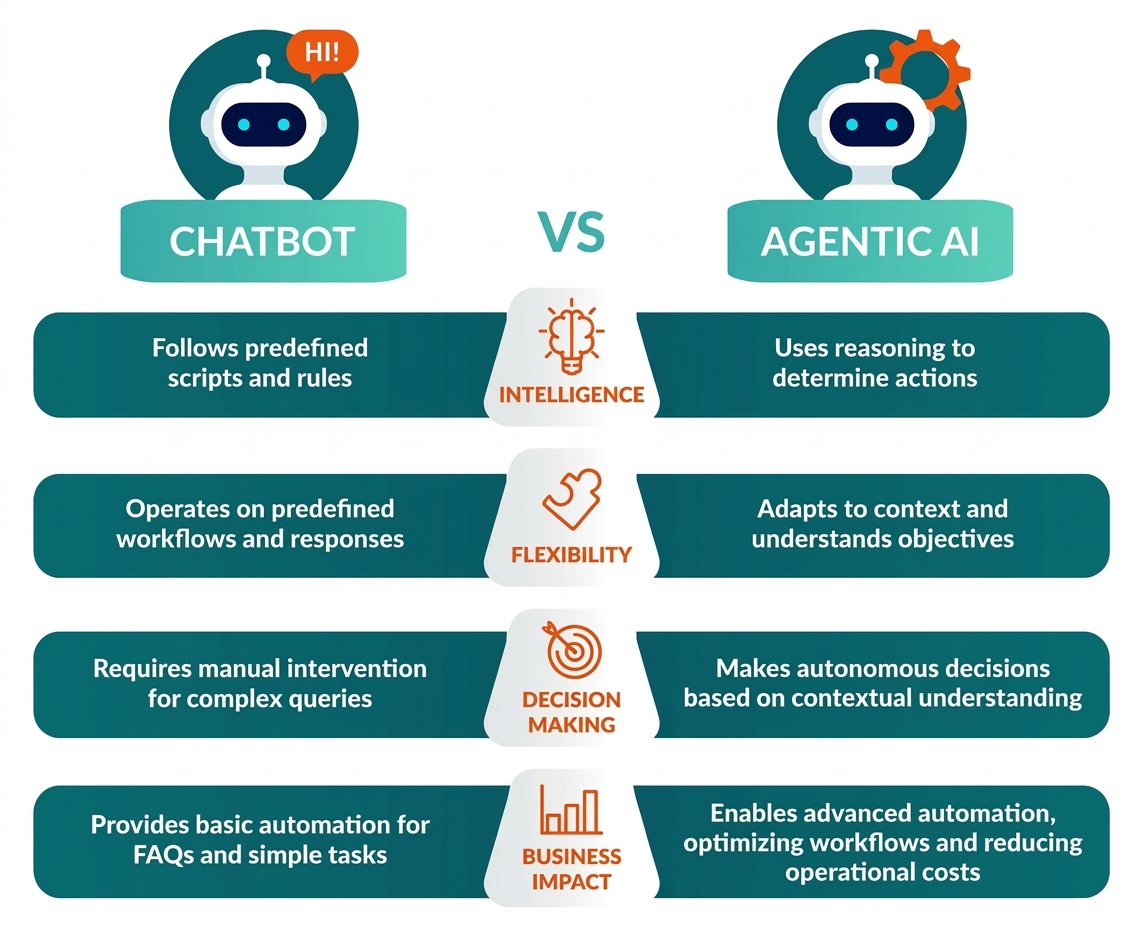

For a long time, the term "AI assistant" was almost synonymous with a chatbot - a simple window where users typed a question and received an answer. These systems were useful, but fundamentally passive. They waited for input, generated text, and stopped there.

Today, that model is rapidly becoming outdated. Modern AI systems are evolving into agents - systems that don’t just answer questions but reason, make decisions, and perform actions. This shift represents a major change in how AI is integrated into data platforms, applications, and enterprise workflows.

In short: the industry is moving from conversation to capability.

Chatbots: intelligent, but passive

Traditional chatbots are essentially language interfaces on top of knowledge. Their main job is to interpret a question and generate the most relevant answer.

A good analogy is a librarian.

Imagine walking into a library and asking:

"How do I process streaming data with Apache Kafka?"

The librarian listens, understands your request, and points you to the right books or documents. That’s helpful - but the librarian does not write the code, deploy infrastructure, or analyze your data. Most chatbots work in exactly the same way. Even when powered by modern large language models, their role remains largely passive: they interpret prompts, retrieve or generate information, and respond in natural language, but they do not act.

This limitation becomes clear in data engineering environments. If you ask a chatbot:

"Check yesterday’s pipeline failure and fix the issue."

The chatbot may explain how to investigate the problem - but it cannot actually inspect logs, trigger workflows, or modify configurations.

To move from explanation to execution, we need something more.

AI Agents: systems that reason and act

AI agents represent the next step. Instead of simply responding to prompts, they combine reasoning with the ability to take actions in external systems.

A better analogy here is a personal assistant with real permissions.

If you ask your assistant:

"Investigate yesterday’s pipeline failure."

They don’t just explain what to do. They check the monitoring dashboard, review the logs, identify the failure point, restart the pipeline and send you a summary. That’s exactly how AI agents are designed to work.

An agent typically operates through a loop:

- Reasoning - interpret the goal and plan the steps required

- Action - call APIs or services to perform tasks

- Observation - analyze the results

- Iteration - refine the plan until the goal is achieved

This cycle transforms AI from a text generator into an operational component of a larger system.
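To make the loop concrete, here is a minimal Python sketch. Everything in it is an assumption made for illustration: the tool names `check_status` and `restart`, the `plan_next_step` function (a hard-coded stand-in for what would normally be a language model call), and the `max_steps` cap are hypothetical and not part of any specific platform.

```python
from typing import Callable, Dict, List, Optional

# Hypothetical tools: plain functions standing in for real API calls.
def check_status(pipeline: str) -> str:
    return "failed"

def restart(pipeline: str) -> str:
    return "running"

TOOLS: Dict[str, Callable[[str], str]] = {
    "check_status": check_status,
    "restart": restart,
}

def plan_next_step(goal: str, observations: List[str]) -> Optional[str]:
    """Stand-in for the reasoning step; a real agent would consult a model here."""
    if not observations:
        return "check_status"      # nothing known yet, so look first
    if observations[-1] == "failed":
        return "restart"           # last observation shows a failure
    return None                    # nothing left to do

def run_agent(goal: str, pipeline: str, max_steps: int = 5) -> List[str]:
    observations: List[str] = []
    for _ in range(max_steps):                       # Iteration (bounded)
        action = plan_next_step(goal, observations)  # Reasoning
        if action is None:
            break
        result = TOOLS[action](pipeline)             # Action
        observations.append(result)                  # Observation
    return observations

print(run_agent("restore the pipeline", "daily_sales"))
```

The step cap is a deliberate design choice in this sketch: an agent that never reaches its goal should stop and report rather than loop forever.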

Reasoning is the new control plane

At the core of modern AI agents is reasoning. It’s not about generating eloquent answers, but about planning what to do next. An agent essentially asks itself a series of questions: What is the objective? What information do I need? Which tools can help me? In what order should I use them? And did the last action actually work? This process turns a language model into a decision-making engine rather than just a system that generates responses.

In practice, reasoning acts like a control layer that coordinates different parts of the system, such as data access, business logic, external services, and feedback loops. That’s why agents can go beyond simple chat interactions and support more complex workflows.

Action Handlers: where intelligence meets reality

The defining capability of AI agents is their ability to act, and this is made possible through Action Handlers. An Action Handler is a structured interface that allows an AI agent to execute operations in external systems. In practice, it acts as a wrapper around a real function or API. Typically, an Action Handler has three parts: a description of what the action does, which the agent uses during reasoning; a defined input schema specifying the required parameters; and an execution function that performs the operation.

This structure enables agents to decide when an action is needed, provide the correct inputs, and trigger execution in real systems. Through Action Handlers, agents can call REST APIs, execute cloud functions, query data warehouses, trigger workflows, and interact with internal services. From an engineering perspective, Action Handlers form the bridge between reasoning and execution. Without them, AI remains conversational. With them, AI becomes operational.
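The three-part structure described above can be sketched as a small Python class. This is an illustrative shape only, not the API of any particular agent framework: the `ActionHandler` class, its field names, and the `restart_pipeline` stub are all hypothetical.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict

@dataclass
class ActionHandler:
    name: str
    description: str               # what the action does, read during reasoning
    input_schema: Dict[str, type]  # required parameter names and their types
    execute: Callable[..., Any]    # the wrapped function or API call

    def __call__(self, **params: Any) -> Any:
        # Check inputs against the schema before touching the real system.
        missing = set(self.input_schema) - set(params)
        if missing:
            raise ValueError(f"missing parameters: {sorted(missing)}")
        for key, expected in self.input_schema.items():
            if not isinstance(params[key], expected):
                raise TypeError(f"{key} must be of type {expected.__name__}")
        return self.execute(**params)

def restart_pipeline(pipeline_id: str) -> str:
    # Hypothetical stub; a real handler would call an orchestration API here.
    return f"restart requested for {pipeline_id}"

restart_handler = ActionHandler(
    name="restart_pipeline",
    description="Restart a failed data pipeline by its ID.",
    input_schema={"pipeline_id": str},
    execute=restart_pipeline,
)

print(restart_handler(pipeline_id="daily_sales"))
```

Keeping validation inside the handler means a malformed request from the model fails early with a clear error instead of reaching the underlying system.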

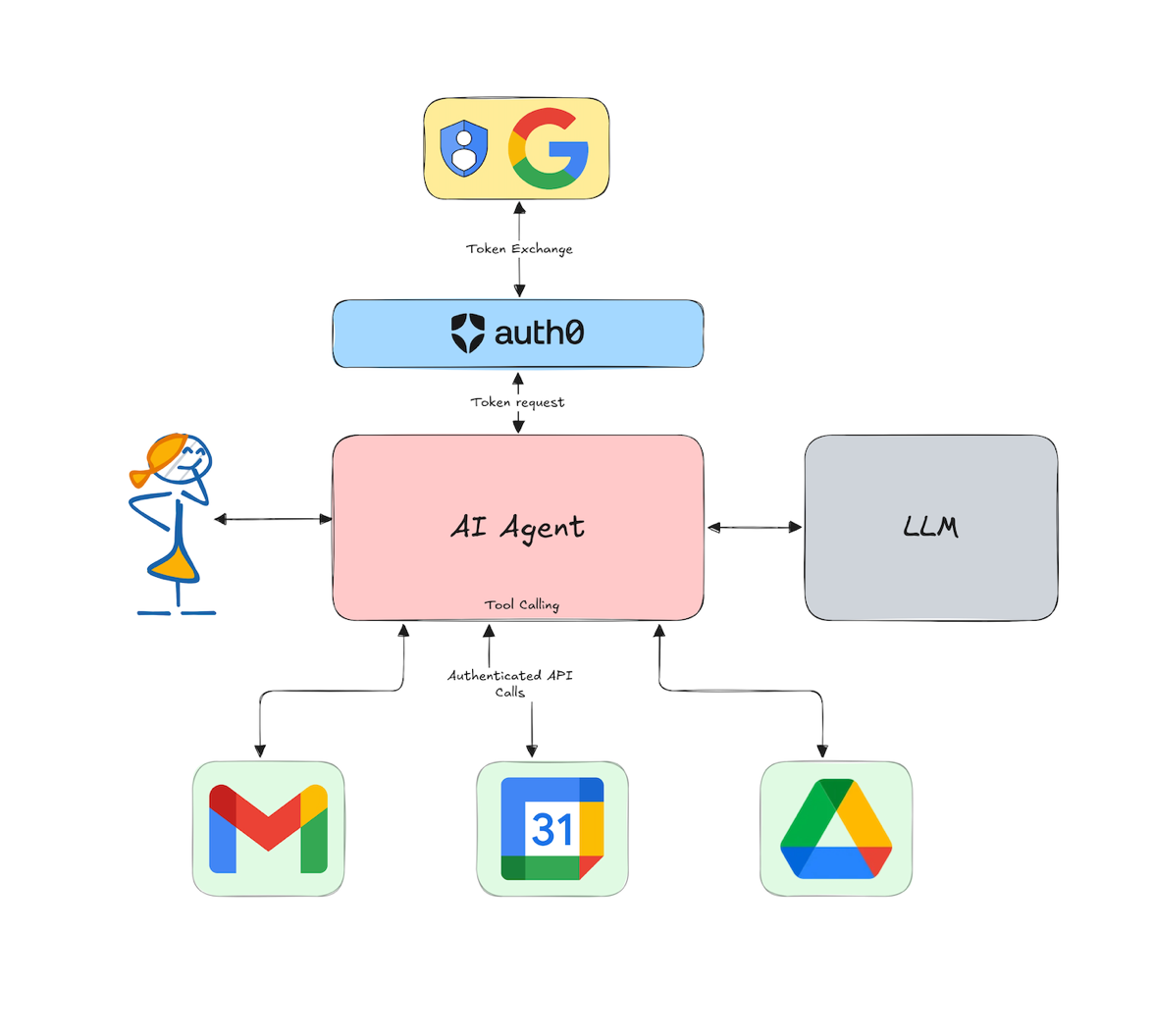

Security in AI Agents: trust, but verify

As AI agents gain the ability to interact with real systems, security becomes critical.

Unlike chatbots that only generate text, agents can trigger workflows, query databases, and modify infrastructure. Without safeguards, this creates real risk. The key principle is least privilege: an AI agent should have only the minimum permissions its tasks require.

Best practices include exposing controlled APIs instead of direct system access; enforcing input validation, logging, and rate limits; using Action Handlers as security boundaries; and monitoring and auditing all agent actions.
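As a rough illustration of these practices, the sketch below wraps every agent action in an allowlist check, a simple rate limit, and an audit log. The `GuardedExecutor` class and the action names are hypothetical, and the in-memory list stands in for whatever logging and policy systems a real deployment would use.

```python
import time
from typing import Any, Callable, Dict, List, Set

class GuardedExecutor:
    """Runs agent actions behind an allowlist, a rate limit, and an audit log."""

    def __init__(self, allowed: Set[str], max_calls_per_minute: int) -> None:
        self.allowed = allowed                      # least privilege: explicit allowlist
        self.max_calls = max_calls_per_minute
        self.call_times: List[float] = []
        self.audit_log: List[Dict[str, Any]] = []

    def run(self, action: str, func: Callable[..., Any], **params: Any) -> Any:
        now = time.monotonic()
        # Keep only the calls made within the last 60 seconds.
        self.call_times = [t for t in self.call_times if now - t < 60.0]
        if action not in self.allowed:
            outcome = "denied"
        elif len(self.call_times) >= self.max_calls:
            outcome = "rate_limited"
        else:
            outcome = "allowed"
        # Every attempt is recorded, including the rejected ones.
        self.audit_log.append({"action": action, "params": params, "outcome": outcome})
        if outcome != "allowed":
            raise PermissionError(f"{action}: {outcome}")
        self.call_times.append(now)
        return func(**params)

executor = GuardedExecutor(allowed={"read_logs"}, max_calls_per_minute=1)
print(executor.run("read_logs", lambda job: f"logs for {job}", job="etl"))
```

Recording rejected attempts alongside successful ones is intentional here: denied actions are often the most useful signal when auditing agent behavior.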

Organizations should treat AI agents like any production service. They should be tested in sandbox environments, monitored in operation, and logged at every step. With proper governance, agents can safely automate complex workflows.

From Chat UI to system architecture

This shift changes how AI systems are designed. Chatbots typically live in the UX layer, such as support widgets, FAQ assistants, and knowledge interfaces. AI agents operate deeper in the stack, interacting with orchestration layers, data platforms, automation systems, and decision engines. Platforms like Vertex AI Agent Builder reflect this transition. They are not built to create better chatbots, but to design systems that can reason and act.

Why this matters for Big Data organizations

For Big Data companies, this shift is fundamental. Data is no longer consumed only by humans. Increasingly, it is queried, interpreted, and acted upon by agents. A chatbot can explain your data platform. An agent can operate it. That has three practical consequences: data engineers design capabilities, not just schemas; APIs become first-class AI interfaces; and governance shifts from access control to action control.

The technology behind modern Agents

Modern platforms are making this shift easier to implement in real-world systems. Vertex AI Agent Builder, mentioned earlier, is one example: it provides tools for building production-ready AI agents.

The key idea is simple: combine language models with structured execution mechanisms.

Two important components make this possible:

Reasoning

Reasoning enables the model to break down a request into steps. Instead of responding immediately, the system evaluates:

- What is the user trying to achieve?

- Which tools or APIs are required?

- What sequence of actions should be executed?

This structured thinking allows agents to solve more complex tasks than a simple question-answer model.

Action Handlers

Action Handlers act as the bridge between an AI model and real systems. They allow an agent to interact with external services such as data pipelines, cloud storage, monitoring systems, databases, and internal APIs.

For example, an agent can query BigQuery for dataset statistics, trigger a Dataflow job, restart a failing pipeline, or generate a monitoring report. In other words, the agent doesn’t just describe the system - it can actually operate it.
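One common way to wire this up is to have the model emit a structured request that the application routes to the matching handler. The sketch below assumes a JSON shape with `name` and `arguments` fields; that shape, the `REGISTRY` dictionary, and both stub functions are assumptions for illustration and do not call any real BigQuery or Dataflow API.

```python
import json
from typing import Any, Callable, Dict

def dataset_stats(dataset: str) -> Dict[str, Any]:
    # Stub with a placeholder value; a real handler would query the warehouse.
    return {"dataset": dataset, "row_count": 0}

def restart_pipeline(pipeline_id: str) -> Dict[str, Any]:
    # Stub; a real handler would call the pipeline service.
    return {"pipeline_id": pipeline_id, "status": "restarting"}

# Hypothetical registry mapping action names to handlers.
REGISTRY: Dict[str, Callable[..., Dict[str, Any]]] = {
    "dataset_stats": dataset_stats,
    "restart_pipeline": restart_pipeline,
}

def dispatch(tool_call_json: str) -> Dict[str, Any]:
    """Route a structured request from the model to the matching handler."""
    call = json.loads(tool_call_json)
    handler = REGISTRY.get(call["name"])
    if handler is None:
        # Unknown actions are reported back instead of raising.
        return {"error": f"unknown action: {call['name']}"}
    return handler(**call.get("arguments", {}))

request = '{"name": "restart_pipeline", "arguments": {"pipeline_id": "daily_sales"}}'
print(dispatch(request))
```

Returning an error object for unknown actions lets the agent observe the failure and adjust its plan, rather than crashing the loop.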

Why this matters for Data Platforms

In Big Data environments, engineers often spend significant time performing repetitive operational tasks:

- Investigating failed jobs

- Running diagnostics

- Collecting logs

- Generating reports

- Orchestrating workflows

AI agents can automate many of these tasks by combining context awareness, reasoning, and system-level access.

Instead of asking:

"What went wrong with my pipeline?"

You might simply say:

"Investigate the failure, fix the issue if possible, and notify the team."

The agent can then analyze telemetry, identify the root cause, and execute corrective actions.
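The "fix the issue if possible" part deserves attention, because it implies a decision about what the agent is allowed to repair on its own. A minimal sketch of that decision follows, assuming a hypothetical log format ("ERROR <Signature>: message") and a hypothetical table of fixes considered safe to automate; anything outside that table is escalated to the team.

```python
from typing import Dict, List

# Hypothetical mapping from error signature to a fix considered safe to automate.
SAFE_FIXES: Dict[str, str] = {
    "TimeoutError": "retry_job",
    "OutOfMemoryError": "rerun_with_more_memory",
}

def find_root_cause(log_lines: List[str]) -> str:
    """Return the error signature from the first ERROR line, or 'unknown'."""
    for line in log_lines:
        if "ERROR" in line:
            # Take the text between "ERROR" and the first colon after it.
            return line.split("ERROR", 1)[1].split(":")[0].strip()
    return "unknown"

def handle_failure(log_lines: List[str]) -> Dict[str, str]:
    cause = find_root_cause(log_lines)
    fix = SAFE_FIXES.get(cause)
    if fix is None:
        # No known safe fix: hand the problem to humans.
        return {"cause": cause, "action": "escalate_to_team"}
    return {"cause": cause, "action": fix}

logs = ["INFO job started", "ERROR TimeoutError: upstream API did not respond"]
print(handle_failure(logs))
```

In a real agent the root-cause step would involve far richer telemetry and model-driven reasoning; the point of the sketch is the explicit boundary between automatic remediation and escalation.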

This shift dramatically changes the role of conversational interfaces. The chat window becomes a control interface for complex systems, not just a place to ask questions.

From conversation to collaboration

The rise of AI agents marks a move beyond simple chat interfaces. Chatbots taught us how to talk to AI. Agents allow us to work with AI. The difference is simple. Chatbots answer questions. Agents accomplish goals. For data teams and engineers, this opens the door to a new generation of tools: systems that don’t just assist, but actively participate in workflows. In the coming years, the most powerful AI systems will not be the ones that talk best. They will be the ones that reason, act, and deliver results.

Ready to move from chatbots to AI Agents?

If you're building a data platform or exploring how to integrate AI agents into your workflows, we can help. We design and implement AI-driven systems that go beyond simple chat interfaces and actively operate on your data. Let’s talk about your use case and explore what AI agents can do in your environment. Contact us to get started!